This article is part of the 7-part Testing LangGraph Applications series. The examples come from the langgraph-testing-demo repository.

Testing LangGraph Applications Series

- Stop Testing AI Outputs. Start Testing State

- How to Structure LangGraph Tests That Actually Scale

- Testing Isn’t Enough: Evaluating LangGraph Workflows That Actually Work

- Testing Parallel LangGraph Workflows Without Losing Control

- Understanding LangGraph Workflows with LangSmith Traces and pytest

- Command vs Send in LangGraph: Choosing the Right Primitive

- What It Takes to Build Production-Ready LangGraph Systems ← You are here

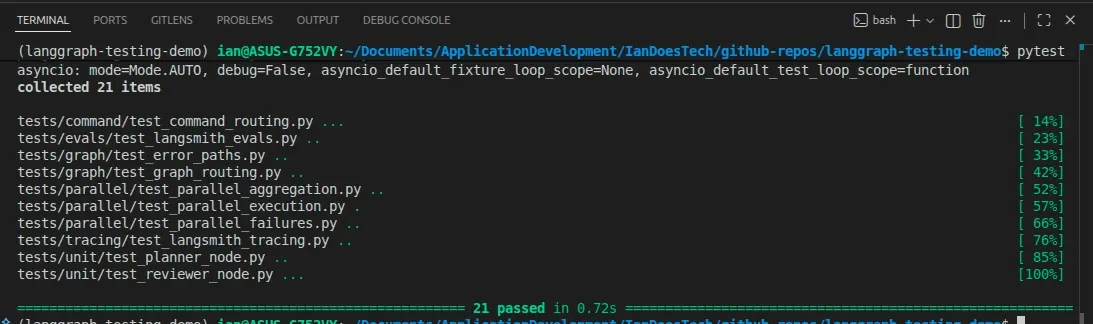

All examples in this series are backed by a comprehensive pytest suite covering unit, graph, failure, parallel, evaluation, and tracing scenarios.

Over the course of this series, we’ve built up a LangGraph system step by step:

- treating workflows as state machines

- structuring pytest suites

- separating testing from evaluation

- adding parallelism with Send

- enabling observability with LangSmith

- understanding Command vs Send

Individually, each of these topics is useful.

Together, they form something more important:

A repeatable way to build production-ready AI systems.

This final article ties everything together.

The Problem with Most AI Systems

Most AI demos look impressive.

They:

- call a model

- produce a result

- maybe call a tool

But they usually lack:

- structure

- testability

- observability

- clear control flow

Which means:

- they break silently

- they are hard to debug

- they don’t scale

- they can’t be trusted

LangGraph gives you the primitives to fix that, but only if you use them intentionally.

The Core Idea

A production-ready LangGraph system is not:

a chain of LLM calls

It is:

a state-driven system with explicit transitions, testable behavior, and observable execution

Everything in this series builds toward that idea.

1. State Is the System Contract

Your system is defined by its state.

Not just data, but control.

Examples from this repo:

review_status

research_results

branch_errors

aggregate_research

final_output

These fields do more than store values:

- they define behavior

- they drive routing

- they expose system decisions

- they make failures visible

Without structured state, you can’t:

- test meaningfully

- debug effectively

- trace execution
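As a minimal sketch, the fields above could be declared as a typed state, with a routing function that reads them. The field types, the status values, and the route names returned by `route_after_review` are assumptions for illustration, not the repository's actual definitions.

```python
import operator
from typing import Annotated, TypedDict


class WorkflowState(TypedDict, total=False):
    # Assumed values: "pending", "approved", "rejected".
    review_status: str
    # Annotated with operator.add so parallel branches can append
    # instead of overwriting each other (the LangGraph reducer convention).
    research_results: Annotated[list[str], operator.add]
    branch_errors: Annotated[list[str], operator.add]
    # Assumed here to hold a merged summary of the research results.
    aggregate_research: str
    final_output: str


def route_after_review(state: WorkflowState) -> str:
    """Pick the next step from state alone (route names are hypothetical)."""
    if state.get("branch_errors"):
        return "handle_errors"
    if state.get("review_status") == "approved":
        return "publish"
    return "revise"
```

Because the routing decision depends only on state, it can be tested with plain dictionaries and no model calls.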

2. Nodes Should Be Small and Predictable

Each node should behave like a function:

- clear inputs (state)

- clear outputs (state updates)

- no hidden side effects

That’s why in the repo:

- nodes return partial updates

- errors are captured explicitly

- logic is deterministic where possible

This makes nodes:

- easy to unit test

- easy to reason about

- easy to reuse
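A rough sketch of what such a node can look like, together with a unit test and a failure test for it. The `search` dependency, the `query` key, and the error message format are hypothetical; the point is the shape: state in, partial update out, failures recorded in state rather than raised.

```python
from typing import Callable


def make_research_node(search: Callable[[str], list[str]]):
    """Build a node around an injected dependency so tests can stub it."""

    def research_node(state: dict) -> dict:
        try:
            hits = search(state["query"])
        except Exception as exc:
            # Capture the failure explicitly instead of crashing the graph.
            return {"branch_errors": [f"research failed: {exc}"]}
        # Return only the keys this node owns, not the whole state.
        return {"research_results": hits}

    return research_node


def test_node_returns_partial_update():
    node = make_research_node(lambda q: [f"hit for {q}"])
    assert node({"query": "langgraph"}) == {"research_results": ["hit for langgraph"]}


def test_node_captures_dependency_failure():
    def broken_search(q: str) -> list[str]:
        raise TimeoutError("upstream timed out")

    node = make_research_node(broken_search)
    assert node({"query": "langgraph"}) == {
        "branch_errors": ["research failed: upstream timed out"]
    }
```

Injecting the dependency is one design choice among several; patching with `monkeypatch` would work as well.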

3. Testing Is Layered, Not Monolithic

A production system doesn’t rely on one type of test.

We used three layers:

Unit tests

- validate node logic

- fast and deterministic

Graph tests

- validate routing and state transitions

- ensure correct behavior

Failure tests

- simulate broken dependencies

- ensure safe termination

This separation is critical.

If everything is an integration test, nothing is debuggable.

For checkpointed or resumable graphs, add one more discipline: create the graph inside the test and compile it with a fresh checkpointer for that test. That keeps checkpoint state isolated while still letting you test interrupts, update_state, time travel, and resume behavior directly.

4. Evaluation Measures Quality

Testing ensures correctness.

Evaluation ensures usefulness.

Using datasets:

input → run graph → score output

lets you:

- detect regressions

- compare versions

- iterate with confidence

Without evaluation, improvement becomes guesswork.
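The input → run graph → score output loop can be sketched in a few lines. Everything here is hypothetical: the dataset, the keyword scorer, and the two `fake_graph_*` functions, which stand in for two versions of a compiled graph. A real setup would more likely use LangSmith datasets or a richer scorer.

```python
from typing import Callable

# A tiny hand-written dataset (illustrative only).
DATASET = [
    {"input": "refund policy", "must_include": "refund"},
    {"input": "shipping time", "must_include": "days"},
]


def keyword_score(output: str, must_include: str) -> float:
    """Score 1.0 if the expected keyword appears in the output, else 0.0."""
    return 1.0 if must_include.lower() in output.lower() else 0.0


def evaluate(run_graph: Callable[[str], str], dataset: list[dict]) -> float:
    """Run every example through the graph and return the mean score."""
    scores = [
        keyword_score(run_graph(example["input"]), example["must_include"])
        for example in dataset
    ]
    return sum(scores) / len(scores)


def fake_graph_v1(question: str) -> str:
    return f"Answer about {question}"


def fake_graph_v2(question: str) -> str:
    return f"Answer about {question}, usually within 3 days"


if __name__ == "__main__":
    print("v1:", evaluate(fake_graph_v1, DATASET))
    print("v2:", evaluate(fake_graph_v2, DATASET))
```

Comparing the two mean scores is what turns "the new version feels better" into a number you can track across changes.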

5. Parallelism Adds Power and Complexity

With Send, you can scale work:

tasks → Send → same node → aggregation

This enables:

- research pipelines

- retrieval systems

- batch processing

But it also introduces:

- partial failures

- ordering issues

- aggregation logic

Which means you must test:

- number of results

- merge correctness

- failure handling

Parallelism is where many systems become fragile.

6. Orchestration Requires Intent

With Command, you control flow:

intent → Command → send_email / send_slack

This enables:

- multi-action workflows

- tool routing

- orchestration logic

The key insight:

Send distributes work

Command controls behavior

Choosing the right primitive keeps your graph:

- readable

- testable

- extensible

7. Observability Makes Systems Understandable

Even with tests and evaluation, you still need:

visibility into real runs

LangGraph and LangSmith give you this with almost no extra code.

By enabling tracing:

export LANGSMITH_TRACING=true

export LANGSMITH_API_KEY=...

you can see:

- which nodes executed

- how state evolved

- where branching occurred

- how parallel runs behaved

The important part:

Good traces come from good design.

If your:

- state is meaningful

- nodes are clear

- routing is explicit

then your traces will tell a useful story.

8. Everything Works Together

These concepts are not separate.

They reinforce each other:

- State design → enables testing and tracing

- Small nodes → enable unit testing

- Testing → ensures correctness

- Evaluation → ensures quality

- Parallelism → scales capability

- Command vs Send → keeps control flow clean

- Tracing → makes everything observable

Remove one, and the system weakens.

A Practical Checklist

If you’re building a LangGraph system, ask:

Structure

- Is my state explicit and meaningful?

- Are my nodes small and predictable?

Testing

- Do I have unit tests for nodes?

- Do I test routing and failures?

Quality

- Do I evaluate outputs with a dataset?

Scale

- Am I using Send correctly for parallel work?

Control

- Am I using Command for orchestration?

Observability

- Can I trace and understand a real run?

If the answer is “no” to any of these, that’s your next improvement.

What This Means in Practice

With this approach, your system becomes:

- predictable → because behavior is tested

- measurable → because outputs are evaluated

- scalable → because parallelism is controlled

- maintainable → because structure is clear

- debuggable → because tracing is available

That’s the difference between:

a demo that works once and a system you can rely on

Final Thought

LangGraph doesn’t magically make systems production-ready.

It gives you the tools.

The difference comes from how you use them:

- design your state carefully

- structure your tests properly

- evaluate your outputs

- choose the right primitives

- make your system observable

Do that consistently, and you don’t just have an AI workflow.

You have a system you can trust.